Platform

When working on NLP projects, you typically need to run lots of things: data processing, annotation, training, evaluation, testing and many more iterative steps. This is hard to solve with only a coding assistant. Ellf comes with a platform that makes it easy to run and monitor your different processes, locally and in the cloud, and collaborate on them with your team.

Projects

Projects let you group tasks, actions, agents and services together within your organization, and manage user access and permissions on a per-project basis. For example, if you’re working on a new model or component, you would typically create a project for it and create all related tasks and actions within that project. You can manage projects in the web UI or via the ellf projects CLI.

Within a project, you can create, run and manage tasks, actions, agents and services:

- Annotate entities (STARTED)

- Train a pipeline (STOPPED)

- Auto-labeler for NER (STARTED)

- Generate Patterns (STARTING)

When using the CLI, you typically want to use the config project command to set the default working project, so you won’t have to specify the --project argument every time you run a command. You can also set the working task, action, agent or service this way. In the web app, the command bar at the top shows the CLI command that corresponds to the current page or view, making it easy to switch between the app and the CLI.

Project plans

Projects also contain plans created by Ellf’s project planning module. These are Markdown documents that are continuously updated and outline the end-to-end development plan, including step-by-step workflows and higher-level strategic goals. Ellf refers to the project plan across its different modules and also uses it to keep the in-app chat and your local coding assistant in sync.

NLP Project Plan: Fraud Report Classifier

Problem Statement

Build a pipeline that processes analyst-written fraud investigation summaries and produces four outputs: fraud type (multi-class, 6 labels), affected product, urgency level, and legal escalation flag. Urgency and legal escalation are derived from business rules applied downstream of model predictions — not model outputs themselves. Starting from scratch on annotation.

Pipeline Overview

Analyst report text

├─→ [Fraud Type Classifier] ← supervised textcat, 6-class exclusive

├─→ [Social Engineering Detector] ← supervised textcat, binary

├─→ [Product Extractor] ← rules (PhraseMatcher on known product names)

├─→ [Amount Extractor] ← regex / MONEY NER for dollar amounts

└─→ [Business Logic Layer] ← NOT a model

   ├─→ urgency: fraud_type × amount × product → policy thresholds

   └─→ escalate: urgency == HIGH → legal team routing

Key architecture decision: Urgency and legal escalation are policy decisions, not language-understanding tasks. Internal thresholds ($100K, insider fraud type) are encoded as rules owned by the fraud team, not embedded in model weights.
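To make the split between model outputs and policy concrete, here is a minimal Python sketch of the business logic layer. It assumes the upstream components have already produced a fraud type label, an extracted amount and a product name. The $100K threshold and the insider fraud rule come from the plan; the LOW and MEDIUM urgency levels, the inclusive comparison against the threshold, the WATCHED_PRODUCTS set and all function names are hypothetical placeholders for whatever rules the fraud team actually owns.

```python
from enum import Enum
from typing import Optional

# From the plan: a $100K amount threshold and insider fraud as a high-urgency type.
AMOUNT_THRESHOLD = 100_000
HIGH_URGENCY_FRAUD_TYPES = {"insider_fraud"}
# Hypothetical: products the fraud team wants flagged at medium urgency.
WATCHED_PRODUCTS = {"wire_transfer"}


class Urgency(Enum):
    LOW = "LOW"        # hypothetical level
    MEDIUM = "MEDIUM"  # hypothetical level
    HIGH = "HIGH"


def urgency(fraud_type: str, amount: Optional[float], product: Optional[str]) -> Urgency:
    """Map model predictions and extracted fields to a policy-owned urgency level."""
    if fraud_type in HIGH_URGENCY_FRAUD_TYPES:
        return Urgency.HIGH
    # Assumption: amounts at or above the threshold count as high urgency.
    if amount is not None and amount >= AMOUNT_THRESHOLD:
        return Urgency.HIGH
    if product in WATCHED_PRODUCTS:
        return Urgency.MEDIUM
    return Urgency.LOW


def escalate_to_legal(level: Urgency) -> bool:
    """Legal escalation is derived from urgency alone: HIGH routes to the legal team."""
    return level is Urgency.HIGH


level = urgency("card_fraud", 250_000, "debit_card")
print(level, escalate_to_legal(level))
```

Because the thresholds live in plain constants rather than in model weights, the fraud team can change them without retraining or re-annotating anything.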

Components

| Component | Approach | Rationale |
|---|---|---|
| Fraud type | Supervised textcat, 6-class exclusive | Core NLP task; domain-specific; needs training |
| Social engineering vector | Supervised textcat, binary | Method flag separate from outcome type; binary is fast to annotate |
| Product extraction | PhraseMatcher rules | Analyst reports name products explicitly; rules are fast and auditable |
| Amount extraction | Regex / spaCy MONEY entity | Structured format; rules are sufficient |
| Urgency | Business logic rules | Thresholds ($100K, fraud type) are policy, not language |
| Legal escalation | Business logic rules | Derived from urgency; policy-owned |
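The regex option for amount extraction can be sketched with Python's standard re module. The pattern below is an assumption about how analysts write dollar amounts (comma-grouped digits, optional decimals, an optional K or M suffix); it does not cover the spaCy MONEY entity alternative the table also lists, and the names AMOUNT_RE and extract_amounts are hypothetical.

```python
import re
from decimal import Decimal

# Assumed formats: "$250,000", "$1,250.50", "$75K", "$1.2M".
AMOUNT_RE = re.compile(
    r"\$\s?(?P<number>\d{1,3}(?:,\d{3})+|\d+)"  # comma-grouped or plain digits
    r"(?P<decimals>\.\d+)?"                     # optional decimal part
    r"\s?(?P<suffix>[KkMm])?\b"                 # optional K (thousand) or M (million)
)
MULTIPLIERS = {"k": Decimal(1_000), "m": Decimal(1_000_000)}


def extract_amounts(text: str) -> list[Decimal]:
    """Return every dollar amount found in the text as a Decimal, in order."""
    amounts = []
    for match in AMOUNT_RE.finditer(text):
        value = Decimal(match["number"].replace(",", "") + (match["decimals"] or ""))
        if match["suffix"]:
            value *= MULTIPLIERS[match["suffix"].lower()]
        amounts.append(value)
    return amounts


print(extract_amounts("Losses of $250,000 and a further $1.2M were reported."))
```

Decimal is used instead of float so that extracted values compare exactly against policy thresholds such as $100K.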

Data Strategy

- Source: Analyst-written fraud investigation summaries (free text, high quality, domain-consistent language)

- No existing labels — annotating from scratch

- insider_fraud and insurance_fraud estimated at 10–15% each — random sampling sufficient
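The prevalence estimate translates into rough example counts. A back-of-the-envelope calculation, assuming the rare classes really do make up 10–15% of a random sample (expected_examples is a hypothetical helper name):

```python
def expected_examples(total: int, rate: float) -> int:
    """Expected number of examples of a label in a random sample of `total` examples."""
    return round(total * rate)


# Training data: 400–500 annotated examples, rare classes at an estimated 10–15% each.
train_range = (expected_examples(400, 0.10), expected_examples(500, 0.15))  # (40, 75)
# Held-out test set: ~100 examples at the same rates.
test_range = (expected_examples(100, 0.10), expected_examples(100, 0.15))  # (10, 15)
```

The test-set figure is worth keeping in mind: with only around 10–15 held-out examples per rare class, per-class scores for insider_fraud and insurance_fraud will shift noticeably with each individual error.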

Annotation Plan

Fraud type classifier

- Recipe: textcat.correct with LLM pre-annotation

- Labels: account_takeover, money_laundering, card_fraud, application_fraud, insider_fraud, insurance_fraud (exclusive)

- Target volume: 400–500 annotated examples

- Evaluation split: Set aside ~100 examples before annotation starts (document-level split)

- Pilot first: Annotate 50–75 manually (no LLM). Fix schema before scaling.

Social engineering vector

- Recipe: textcat.binary

- Label: social_engineering_vector (true/false)

- Pass: Second pass after fraud type annotation is stable

- Target volume: 200–300 examples

Schema decision

- social_engineering is a method, not an outcome, so it was removed from the fraud type labels

- Added as a separate binary attribute to avoid confusable label pairs

Evaluation Strategy

Test set

- Hold out ~100 examples before any annotation begins (document-level split)

- Never used in training; kept constant as the ground truth benchmark
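A document-level split means the held-out set is chosen by report rather than by sentence or example, so text from one report never ends up on both sides. A minimal sketch, assuming each report has a stable ID and every derived example carries a doc_id field (the field name, the report IDs and both function names are hypothetical):

```python
import random


def hold_out_test_set(doc_ids, n_test=100, seed=0):
    """Choose held-out documents once, before any annotation starts."""
    ids = sorted(set(doc_ids))  # dedupe and fix the order so the seed is reproducible
    random.Random(seed).shuffle(ids)
    test_ids = set(ids[:n_test])
    train_pool = [doc_id for doc_id in ids if doc_id not in test_ids]
    return test_ids, train_pool


def route_examples(examples, test_ids):
    """Send each example to the side of the split its parent document belongs to."""
    train = [ex for ex in examples if ex["doc_id"] not in test_ids]
    test = [ex for ex in examples if ex["doc_id"] in test_ids]
    return train, test


report_ids = [f"report-{i:04d}" for i in range(1000)]  # hypothetical report IDs
test_ids, train_pool = hold_out_test_set(report_ids)
```

Fixing the seed and sorting the IDs first keeps the test set constant across reruns, which is what allows it to serve as the unchanging benchmark described above.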

Metrics

- Per-class F1 for all 6 fraud types — do not rely on macro average alone

- insider_fraud and insurance_fraud tracked separately

- Confusion matrix — expected confusables: card_fraud ↔ account_takeover, application_fraud ↔ money_laundering
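Per-class F1 and the most frequent confusions can be computed from gold and predicted labels with a few lines of standard-library Python. This is an illustrative sketch for single-label (exclusive) predictions with hypothetical function names; in practice you would likely use the scores reported by your training setup or a library such as scikit-learn.

```python
from collections import Counter


def per_class_f1(gold, pred, labels):
    """F1 for each label, treating that label as the positive class."""
    pairs = list(zip(gold, pred))
    scores = {}
    for label in labels:
        tp = sum(g == label and p == label for g, p in pairs)
        fp = sum(g != label and p == label for g, p in pairs)
        fn = sum(g == label and p != label for g, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        total = precision + recall
        scores[label] = 2 * precision * recall / total if total else 0.0
    return scores


def top_confusions(gold, pred, n=5):
    """Most frequent (gold, predicted) pairs among the misclassified examples."""
    return Counter((g, p) for g, p in zip(gold, pred) if g != p).most_common(n)


gold = ["card_fraud", "card_fraud", "account_takeover", "insider_fraud"]
pred = ["card_fraud", "account_takeover", "account_takeover", "insider_fraud"]
labels = ["card_fraud", "account_takeover", "insider_fraud"]
print(per_class_f1(gold, pred, labels))
print(top_confusions(gold, pred))
```

Listing the error pairs directly makes it easy to check whether the expected confusables (card_fraud and account_takeover, application_fraud and money_laundering) are actually the ones that dominate.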

Baselines

- Most-frequent-class baseline before any model evaluation
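The most-frequent-class baseline predicts the training set's majority label for every test example. A minimal sketch with a hypothetical function name and toy labels:

```python
from collections import Counter


def most_frequent_class_baseline(train_labels, test_labels):
    """Predict the training majority label for every test example and report accuracy."""
    majority, _ = Counter(train_labels).most_common(1)[0]
    correct = sum(label == majority for label in test_labels)
    return majority, correct / len(test_labels)


train_labels = ["card_fraud"] * 5 + ["account_takeover"] * 3
test_labels = ["card_fraud", "account_takeover", "card_fraud", "insider_fraud"]
majority, accuracy = most_frequent_class_baseline(train_labels, test_labels)
```

This baseline's F1 is zero for every class other than the majority one, which is one reason the plan asks for per-class scores instead of a single aggregate number.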

Training curves

- Train on 25/50/75/100% after each annotation batch

- Rising at 100% → annotate more; flat → investigate schema or architecture
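The training-curve check needs nested subsets, so that each larger run contains the examples from the smaller ones, plus a rule for reading the result. The sketch below only prepares the subsets and applies the decision; training and scoring are left to your training setup. The function names, the example scores and the min_gain threshold of 0.01 are assumptions, not values from the plan.

```python
import random


def curve_subsets(examples, fractions=(0.25, 0.5, 0.75, 1.0), seed=0):
    """Nested training subsets: every smaller subset is a prefix of the larger ones."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    return {f: shuffled[: round(len(shuffled) * f)] for f in fractions}


def next_step(scores, min_gain=0.01):
    """Read a training curve (scores ordered by subset size) using the plan's rule."""
    # Assumption: "rising" means the last step improved by at least min_gain.
    if scores[-1] - scores[-2] >= min_gain:
        return "annotate more"
    return "investigate schema or architecture"


subsets = curve_subsets(range(400))
# Hypothetical scores from training on each subset and evaluating on the test set:
print(next_step([0.55, 0.62, 0.68, 0.73]))
```

Using one shuffle and taking prefixes keeps the comparison fair: the only thing that changes between points on the curve is the amount of data.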

Memorisation check

- Train on pilot examples, evaluate on those same examples — must be near-perfect

Roadmap

| Phase | What | Output |
|---|---|---|
| 1: Pilot | Read reports, write guidelines, annotate manually | Stable schema + 75 examples |
| 2: Baseline | Train first model, memorisation check | Go/no-go on schema |
| 3: Scale annotation | LLM-assisted textcat.correct to 400–500 examples | Training dataset |
| 4: Train & evaluate | Full training run, per-class F1, error analysis | v1 fraud type model |
| 5: SE vector pass | Binary annotation pass + training | v1 SE vector model |
| 6: Rules layer | Product PhraseMatcher, amount regex, urgency/escalation rules | Complete pipeline |

Next Steps

- Hold out ~100 docs as the test set before touching any annotation tool

- Read 50–75 reports manually to validate the schema

- Write one-page annotation guidelines with label definitions and tiebreaker rules

- Annotate the pilot batch using the textcat recipe

- Train a quick baseline and run the memorisation check

- If training curves are rising, scale with LLM-assisted annotation

- Build the rules layer (product PhraseMatcher, amount regex) in parallel

Decision Log

- Urgency: Business logic. Thresholds ($100K, insider fraud type) are policy rules.

- Legal escalation: Business logic derived from urgency. Policy-owned.

- Product extraction: PhraseMatcher rules first. Analyst reports name products explicitly.

- Fraud type: Supervised textcat, 6-class exclusive. Core NLP task.

- Social engineering: Binary vector flag, not a fraud type label. It's a method, not an outcome.

- Evaluation-first: 100-doc test set held out before annotation begins.

You can view project plans in the UI via the Plans page or using the plans CLI. Plans are also versioned with tracked changes as the agent or user makes edits to them.

Chat with Ellf

The platform includes a chat widget for chatting with Ellf from any point in the app. The agent is able to perform common actions and navigate the app for you. Typing @ lets you reference available resources, including tasks, actions, agents, assets, datasets and registered users in your organization.

Things the in-app chat can do include:

- answer questions about project planning and NLP strategy

- review the project and suggest next steps, improvements or ideas

- pre-populate the form for creating new tasks, actions, agents and services

- start and stop tasks, actions, agents and services

- assign agents and users to tasks

- compute statistics about data and running jobs

- navigate to specific pages and sub-pages

When you ask the agent to create, run or delete things for you, it will ask for an additional confirmation. Under the hood, it will perform actions under your user account, which means that the agent only ever has access to what the user can access.

Performing actions via the chat with confirmation

Start @Actions/Company Reports Training and show me the logs.

Handover to coding assistant

At some point during a conversation, the work may need to move back to local development and writing code. In that case, you can hand over the conversation to your coding assistant, such as Claude Code, using the /ellf-todo command.

Handover from in-app chat to Claude Code

Sure! Here’s the handoff summary:

| Field | Value |
|---|---|
| Request ID | 523060d8-b2db-4fd5-b541-5ee119007501 |
| Status | planned |
| Description | Write loader.py for documents from data warehouse |

In your local Claude Code, run /ellf-todo to pick it up – it will load our full conversation context automatically.

› /ellf-todo

✔ Successfully updated coding request 523060d8-b2db-4fd5-b541-5ee119007501

⏺︎ Context summary: Phase 1 of an e-commerce aspect extraction project. The task is to write loader.py for the internal data warehouse API that segments sentences and yields {id, text, date} dictionaries.

Running /ellf-todo loads the conversation and continues it in your coding assistant, which can create files and develop code. By default, it picks up the latest conversation, but you can also call the command explicitly with a conversation ID or select from the available past conversations.

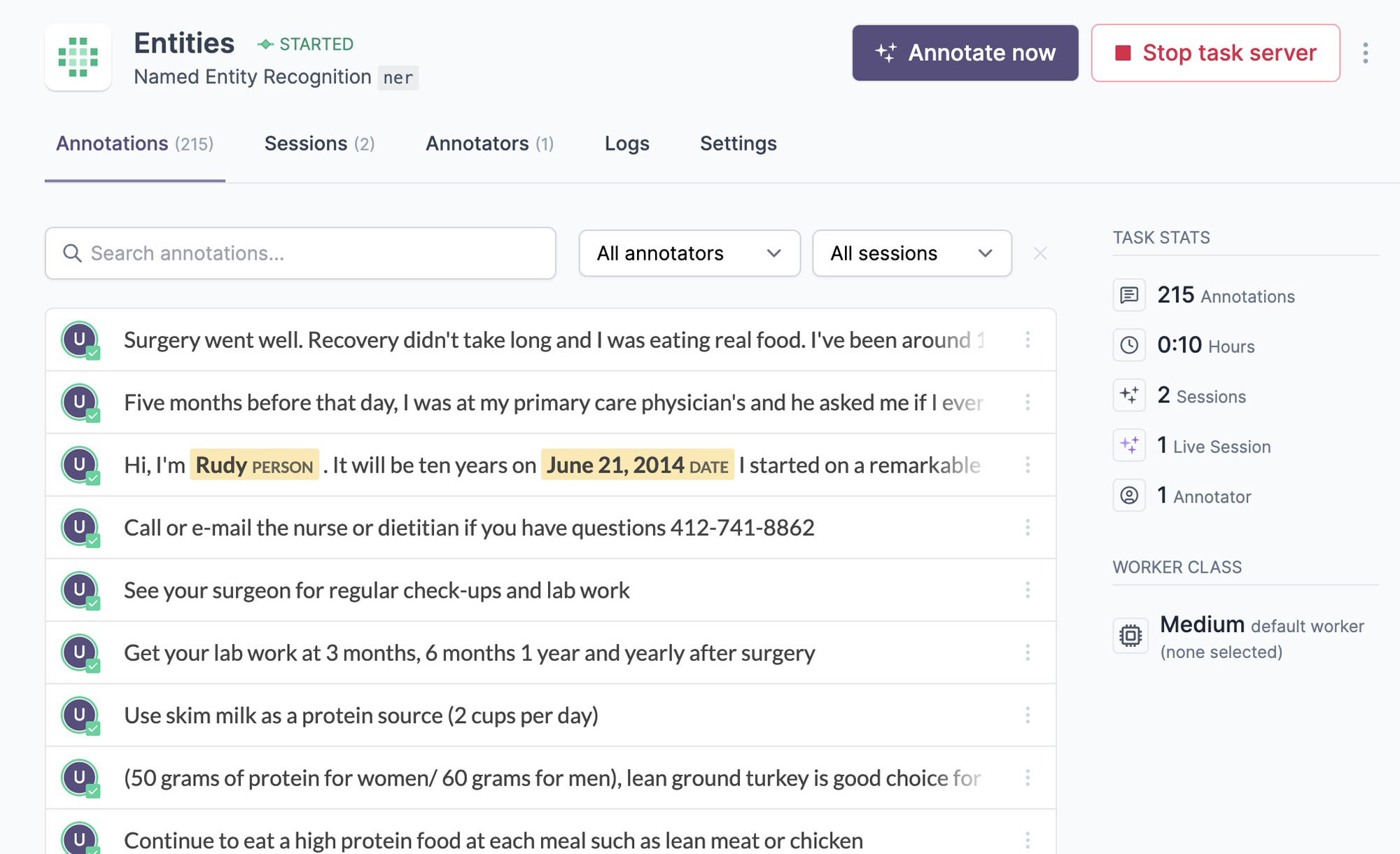

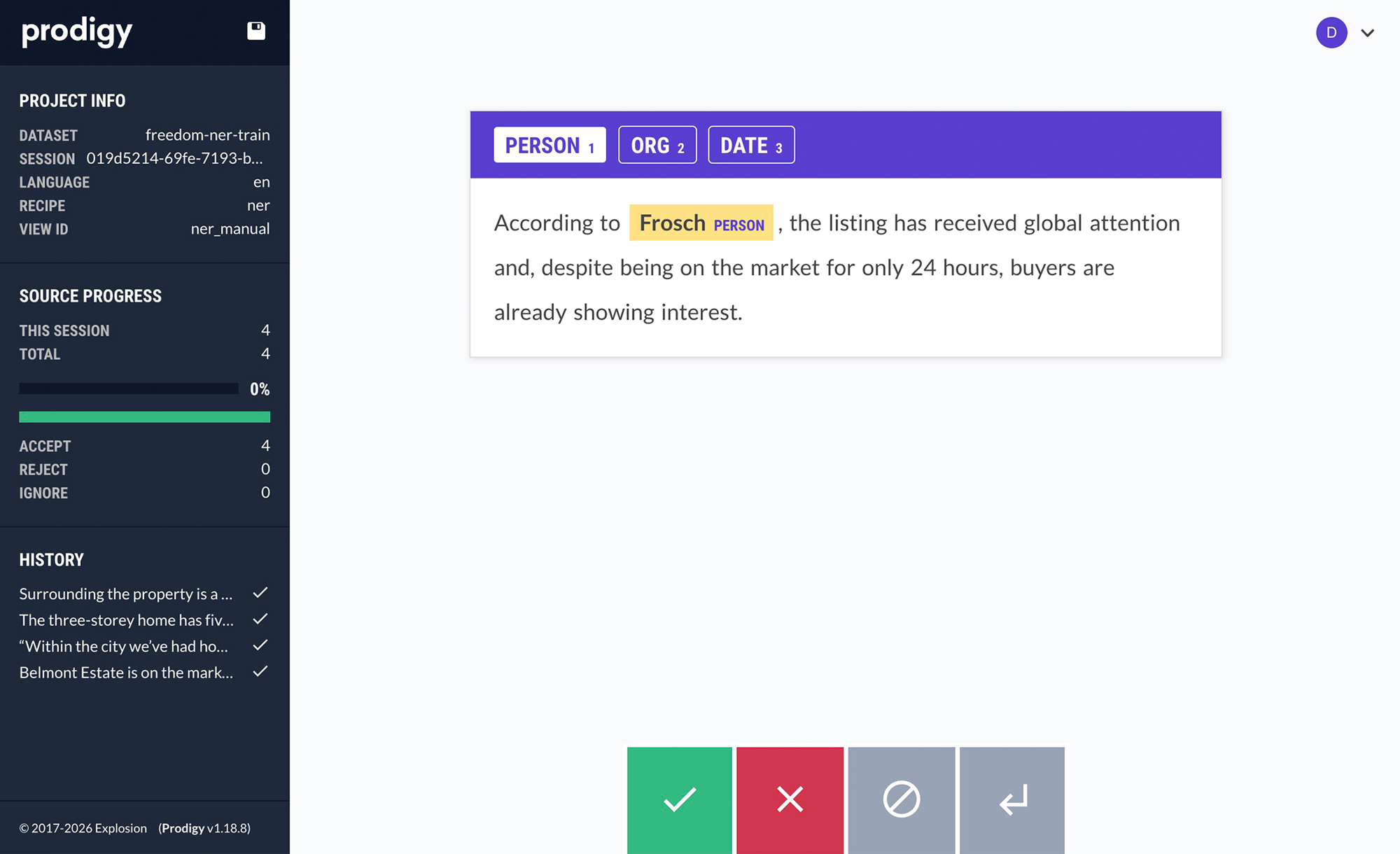

Annotation

Ellf ships with our popular annotation solution Prodigy for efficient data labelling, and brings it into a collaborative cloud environment. When you start a task, Ellf will spin up the annotation server using one of the available recipes for NLP tasks like span annotation, text classification or relation extraction. You can then assign human annotators as well as automated annotation agents, and view their progress in the app and on the CLI using the tasks command. The annotation process runs entirely on your cluster, so your data stays fully private and under your control.

- Annotate entities (STARTED)

- Annotate categories (STOPPED)

The app shows you an overview of your available tasks, running and completed annotation sessions, as well as the created data. To create annotations yourself, you can click the Annotate now button on the task page. Annotators assigned to a task are able to access the annotation UI only.

Ellf’s data annotation module also makes your coding assistant proficient in annotation best practices and helps you design your label scheme, structure your annotation task and configure the right annotation interface. The Prodigy module knows Prodigy’s developer API and helps you implement fully custom workflows and interfaces.


User management

Organizations are your top-level account for Ellf and include your team members and projects. You’d typically have one organization for your company, although it’s also possible to have multiple orgs for different groups and departments if you need more fine-grained access control. To invite users to your organization, click Settings → Invite in the platform.

Roles and Permissions

Ellf provides three basic roles for users: Administrator, who has access to everything and can manage the organization, Developer, who has access to everything needed for working with and customizing Ellf, and Annotator, who only has access to the annotator dashboard and annotation UI for projects and tasks they’re added to.

| Permission | Administrator | Developer | Annotator |
|---|---|---|---|
| Manage organization and billing | | | |
| Invite developers and annotators | | | |
| Access projects dashboard | | | |
| Access annotator dashboard | | | |
| Manage all projects | | | |
| Manage project they’re in | | | |
| Create and manage tasks, actions, agents and services | | | |
| Upload data and code to cluster | | | |
| Manage data and code on cluster | | | |
| Add new clusters | | | |
| Create annotations | | | |

About developer access to the cluster

Developers need to be able to upload code to the cluster and run it, so there’s no point in restricting their access within the cluster, since this could be circumvented by code anyway. If you need more fine-grained permissions for different cluster resources, you can set up and connect multiple clusters.

The in-app chat agent will perform all actions as the currently authenticated user, so it is only able to do what the user has access to.